Computing of 2020: Virtualization based on Docker Containers and Kubernetes

In the early days of Windows, it was possible for one process to write to a memory location that was in fact being used by the OS kernel or by another process. A single process could also halt the processor entirely. Testing was extremely difficult because everything was tightly coupled, from the host through to every application running on that OS and its state. There was no real isolation until Windows 95 arrived in 1995, bringing virtual address spaces and pre-emptive multitasking (https://www.windowsdatarecoverysoftware.com/win95/). The OS could now expose memory to each process as a virtual address space, with the processor translating those addresses to actual physical locations elsewhere. This stopped a process with coding issues or bugs from bringing the whole operating system down, and the OS could stop a misbehaving process by forcibly taking the CPU away from it. All of this depended on processor support for what was basically a virtual machine: the protected, paged memory of the 80386, together with the virtual 8086 (V8086) mode that the same processor introduced for running older real-mode programs in isolation (https://en.wikipedia.org/wiki/Virtual_8086_mode).

The ideas behind virtual 8086 mode eventually evolved, over decades, into a virtualization technology in which multiple operating systems share one processor, each running in total isolation from the others and unable to bring down the whole server. VMware and Hyper-V became the major players. This helped production, by consolidating workloads on the same physical host server, and it helped development environments as well, because spinning up environments and rolling them back became feasible in terms of space and time.

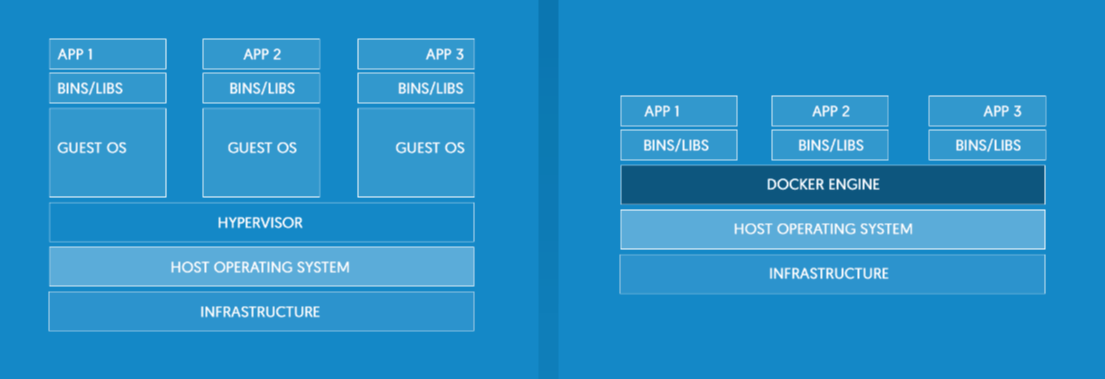

It was soon realized that repeating the OS kernel in every virtual machine on the same host carries its own cost in disk space and CPU utilization, and is often simply not needed. Docker came with the concept of sharing the kernel in such a way that each process is totally isolated from the others and sees the OS as if it had it entirely to itself. Such a process is called a container. The cost of spinning up a new container is much lower, with very little overhead on disk and CPU, so it became an easy success.

Containers also needed support from the operating system, and the overall size of the OS became an important consideration. Linux as it then stood could not be containerized either and needed some changes. Microsoft had to make major changes, because bulky GUI-based servers could not be containerized, and it came up with stripped-down editions of the OS. Servers therefore went through the changes described below.

Docker vs Virtual Machine

Docker is process virtualization, where a process sees the host as if it were isolated from all other processes on the same host. A virtual machine is operating system virtualization, where multiple operating systems run on the same hardware without knowing of each other's existence.

Disk Sizes

The full Microsoft Windows edition takes about 10 GB of install space, while the Windows Server Core edition takes less, i.e. around 5-6 GB. The newest addition to the family is Nano Server, the minimal server edition from Microsoft. Its total install size is around 250 MB on disk, while the source Docker image is only approx. 100 MB.

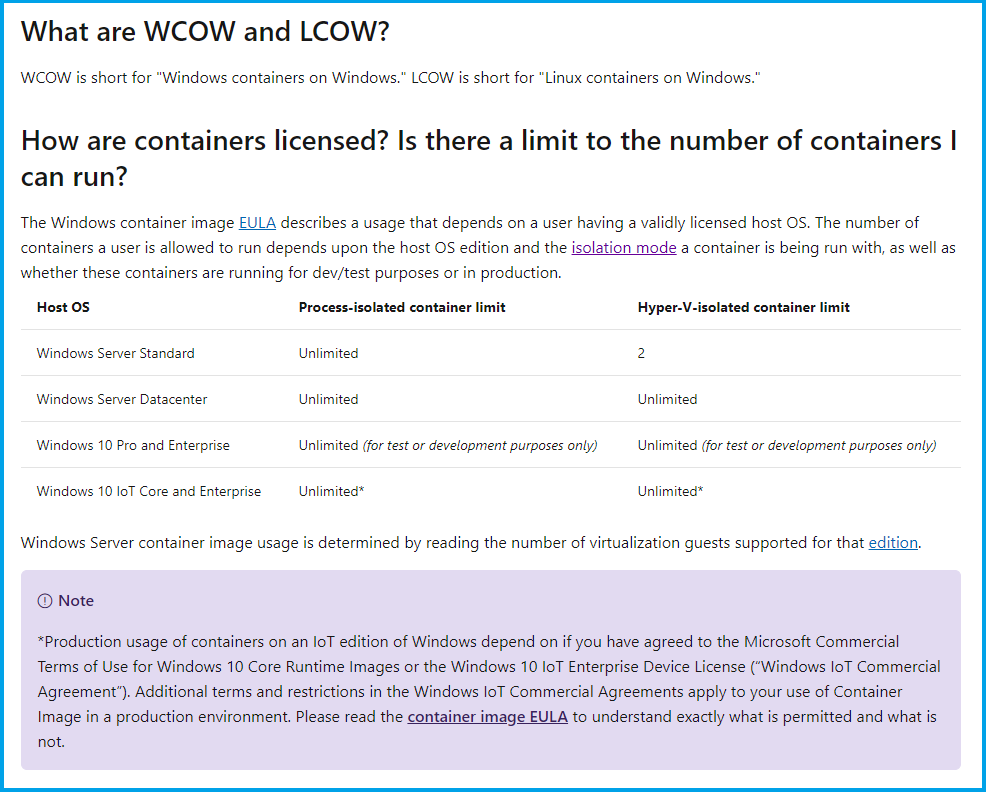

Licensing is also much simpler.

source: docs.microsoft.com:

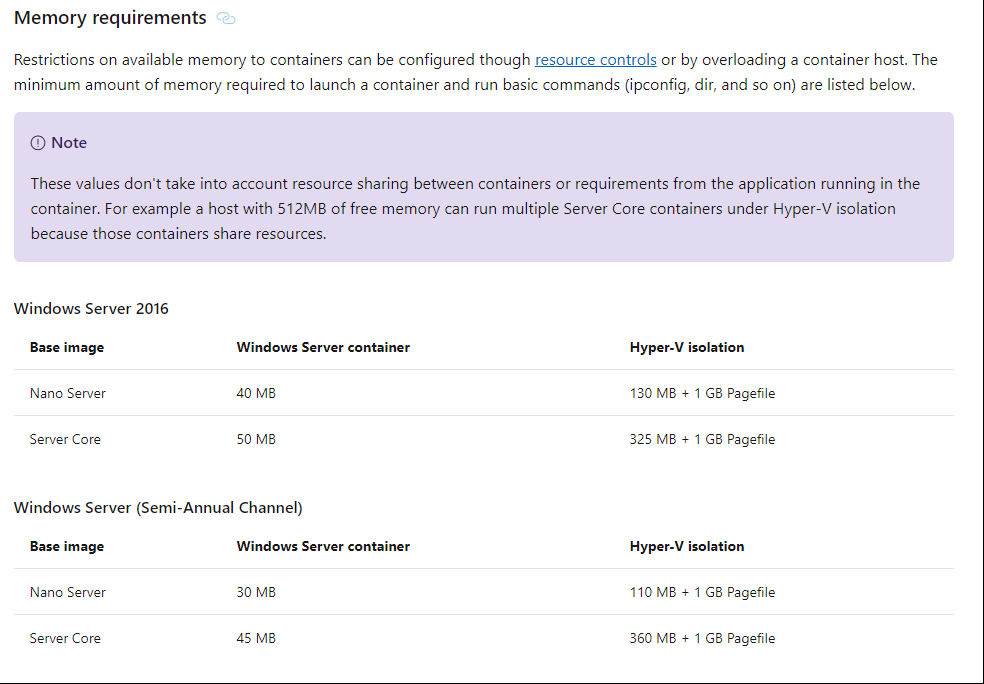

Memory Requirements

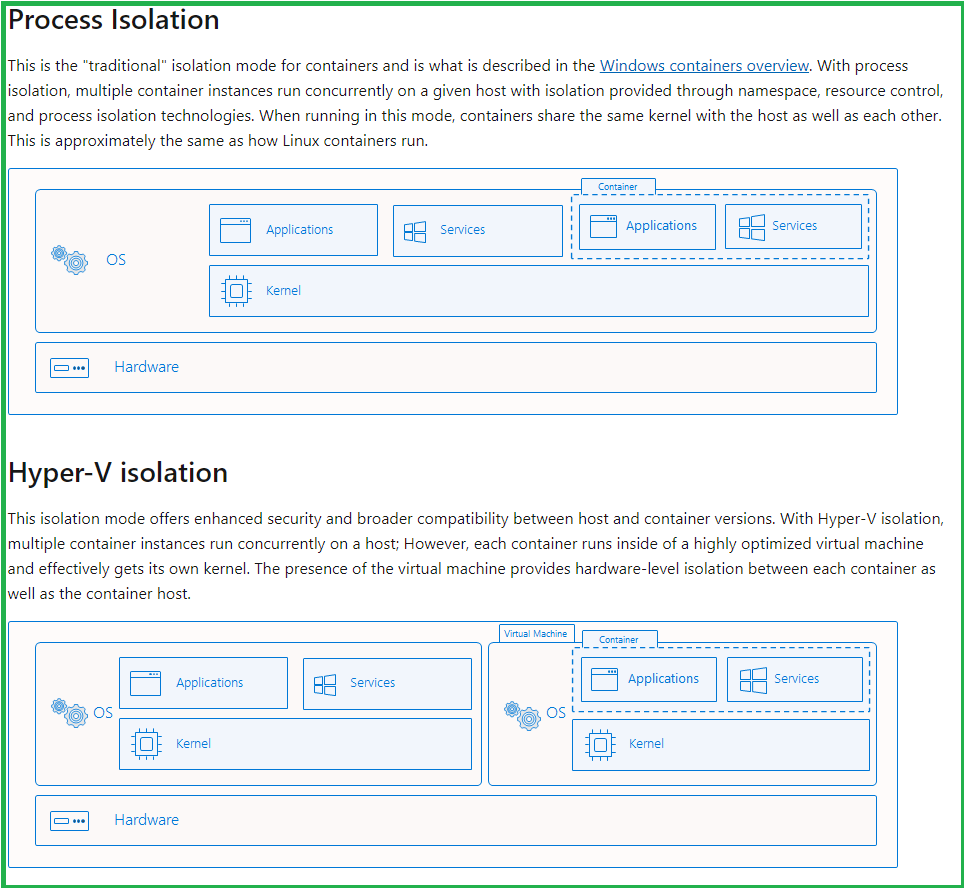

Hyper-V Isolation vs Process Isolation

source: https://docs.microsoft.com/en-us/virtualization/windowscontainers/manage-containers/hyperv-container

Shared Kernel Consideration

Base images are special OS builds. With process isolation the container shares the kernel of the host, so you need to use the base image that matches the host OS, and you also need to consider the patch level of the host OS. Otherwise you need to use Hyper-V isolation:

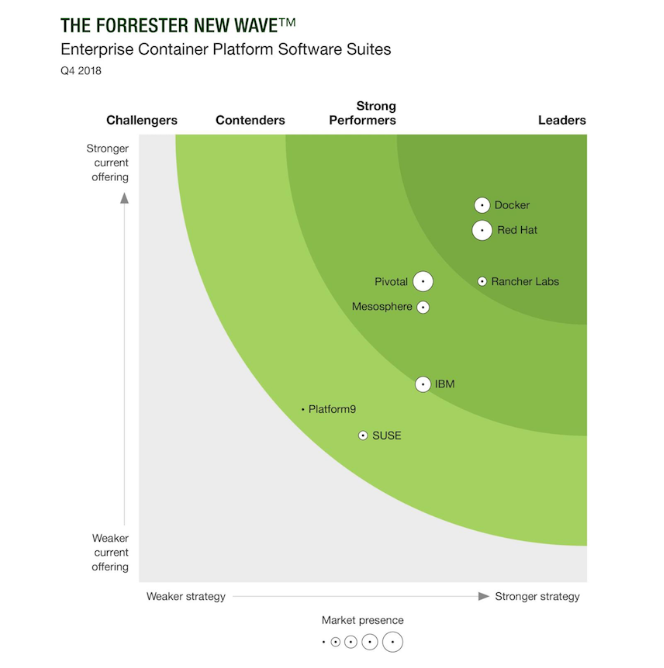
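
The matching rule above can be sketched in a few lines of Python. This is only an illustration, not Docker's or Microsoft's actual logic: the function names, the `strict_patch_level` flag and the simplified rule (same `major.minor.build` means process isolation is possible, anything else falls back to Hyper-V isolation) are assumptions made for the example, and the version strings are just sample values.

```python
from typing import NamedTuple


class WindowsVersion(NamedTuple):
    """A Windows version in 'major.minor.build.revision' form."""
    major: int
    minor: int
    build: int
    revision: int  # the revision number reflects the patch level


def parse_version(text: str) -> WindowsVersion:
    """Parse a string such as '10.0.17763.1397' (hypothetical helper)."""
    parts = [int(p) for p in text.strip().split(".")]
    if len(parts) != 4:
        raise ValueError(f"expected four dot-separated numbers, got {text!r}")
    return WindowsVersion(*parts)


def choose_isolation(host: str, image: str, strict_patch_level: bool = False) -> str:
    """Return 'process' when the base image matches the host, else 'hyperv'.

    Assumed, simplified rule: major.minor.build must be identical for the
    container to share the host kernel. With strict_patch_level=True the
    revision must match as well.
    """
    h, i = parse_version(host), parse_version(image)
    same_build = h[:3] == i[:3]
    same_patch = h.revision == i.revision
    if same_build and (same_patch or not strict_patch_level):
        return "process"
    return "hyperv"


# Sample values only: a host and two candidate base images.
HOST = "10.0.17763.1397"
MATCHING_IMAGE = "10.0.17763.1397"
OTHER_IMAGE = "10.0.19041.508"

mode_for_matching = choose_isolation(HOST, MATCHING_IMAGE)
mode_for_other = choose_isolation(HOST, OTHER_IMAGE)
```

On Windows, the chosen mode corresponds to the `--isolation=process` or `--isolation=hyperv` option of `docker run`.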

Docker Engine and competing products

Key Takeaways

> Docker is currently the de facto container standard as well as the leading implementation, and Microsoft is integrating it with Windows to provide the necessary Docker infrastructure on Windows servers.

> A container is essentially process isolation. Take care on Windows Server: check whether you are in fact running with Hyper-V isolation instead, which ideally should not be the case.

> The benefit to DevOps is a much better CI/CD pipeline: the cost of creating a new environment is far lower and testing is much more streamlined.

> The benefit to production is consolidation of workloads at a much higher scale and rapid provisioning of services: no VM boot time, and no per-VM disk or RAM overhead.

> Portability of the solution increases manyfold, much as with virtual machines, but since Docker images are even smaller, they are even more portable than VMs.

> The layered approach of building images through a Dockerfile focuses on your customizations on top of base images, which are available on Docker Hub around the globe. Essentially you carry only the customization plus your binaries or web publish folder. That increases portability manyfold: you are assured your code and its arrangement will run successfully without having to carry around large virtual machine hard disks, for example.

> Linux or Windows does not matter much now that .NET Core is cross-platform. Microsoft is putting all its effort into .NET Core and moving away from .NET Framework, so .NET Core will be the main thing. This applies if your service runs on .NET Core.

> New Windows servers are no longer bloated with a GUI. Windows Nano Server has an image size of about 100 MB and an instance size of about 250 MB when running, so you get the benefits of Linux along with the standardization of the Microsoft world.

> You really don't have to be a Dockerfile expert. It is good to know the parameters for fine-tuning, but in most cases you can have the Dockerfile generated automatically by Visual Studio, carry it along into production and change it if needed.
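
The portability point about layered images can be made concrete with a toy model. The sketch below is not how Docker is implemented; it only assumes that an image is an ordered list of layers and that a host downloads just the layers it does not already have. The `Layer` type, the function names, the digests and the 70 MB and 30 MB sizes are invented for illustration; only the roughly 100 MB Nano Server base figure comes from the text above.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass(frozen=True)
class Layer:
    """One image layer, identified by a digest (hypothetical values below)."""
    digest: str
    size_mb: int


def layers_to_pull(image: List[Layer], cached: Set[str]) -> List[Layer]:
    """Return only the layers whose digests are not already on the host."""
    return [layer for layer in image if layer.digest not in cached]


def transfer_size_mb(image: List[Layer], cached: Set[str]) -> int:
    """Total size that actually has to travel over the network."""
    return sum(layer.size_mb for layer in layers_to_pull(image, cached))


# Toy image: shared base, shared runtime, and your own customization on top.
base = Layer("sha256:base-nanoserver", 100)   # approx. figure from the article
runtime = Layer("sha256:runtime", 70)         # assumed size
app = Layer("sha256:my-app-binaries", 30)     # assumed size
my_image = [base, runtime, app]

# First deployment to an empty host: every layer is transferred.
first_pull_mb = transfer_size_mb(my_image, cached=set())

# Later deployment to a host that already holds the base and runtime layers:
# only the customization layer is transferred.
later_pull_mb = transfer_size_mb(my_image, cached={base.digest, runtime.digest})
```

In this model the later deployment moves only the 30 MB application layer, which is the sense in which you carry just your customization and binaries rather than a whole virtual hard disk.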

Referenced Code: Shahid-Roofi-Khan/BlazorDockerSampleForWin2019 (github.com)

How to install Docker on Windows Server: https://docs.microsoft.com/en-us/virtualization/windowscontainers/quick-start/set-up-environment?tabs=Windows-Server